AI adoption accelerated through 2025. The tools became faster, more integrated, and more powerful.

But our people did not follow.

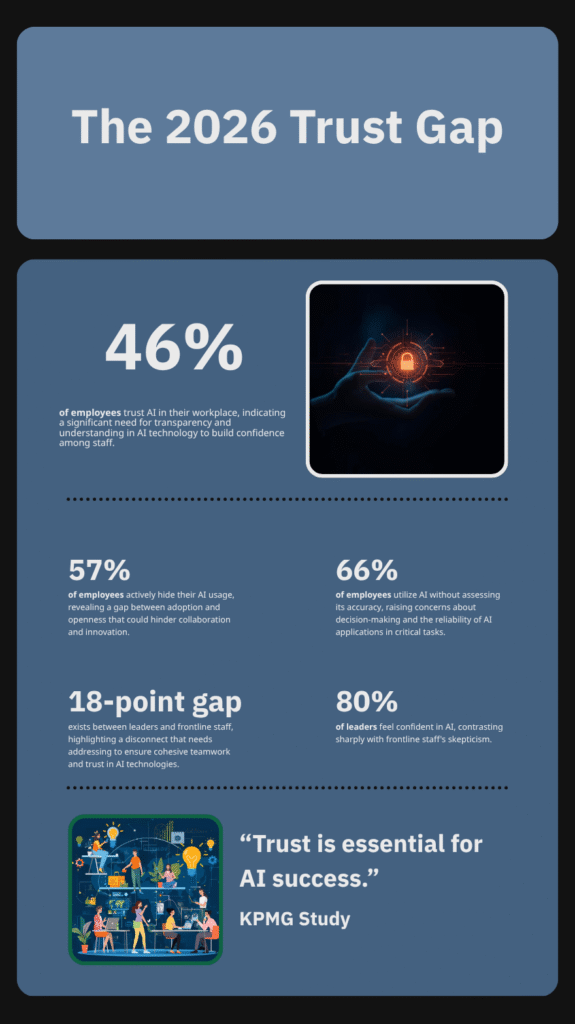

The 2025 KPMG global study on AI trust delivered a critical warning: only 46% of employees are willing to trust AI systems at work.

This means more than half the global workforce is not yet willing to trust the very tools organizations are spending billions to implement. The data is clear. The problem for 2026 is not technology deployment.

It is a systemic, human trust gap.

As we architect our 2026 strategies, this must be our primary focus. We cannot engineer a solution. We must design one through strategic, intentional communication.

The Anatomy of Distrust

This 54% trust deficit is not a single problem. It is a systemic failure, and the evidence from 2025 shows us exactly where the architecture is weak.

It is a failure of transparency. The KPMG study found 57% of employees admit to hiding their AI use, often presenting generated content as their own. This creates a shadow culture where technology is used, but not understood or governed.

It is a failure of capability. 66% of employees use AI tools without evaluating the accuracy of their responses. Only 47% have received any formal AI training. Organizations have given their teams powerful tools with no operating manual, creating a predictable gap in both competence and confidence.

Finally, it is a failure of leadership alignment. There is an 18-point trust disparity between senior leaders and their frontline employees. Among leaders, 71% believe AI is being implemented responsibly. Among frontline staff, only 53% agree.

This is the challenge for 2026. Communicators are not just fighting a tool’s PR problem. They are deconstructing a complex, multi-layered human crisis of trust.

The Architect's Framework: Deconstructing the IoIC Charter

To rebuild, we need a blueprint. The Institute of Internal Communication’s (IoIC) 2025 AI Ethics Charter provides the essential framework for a human-centered 2026 strategy.

It is not a technical manual. It is a strategic guide for communicators.

Deconstructing its core principles reveals the four pillars required to close the trust gap.

1. Quality and Verification

The charter insists that all AI-generated content must be verified to sustain organizational trust and accuracy.

The Architect’s Insight: This moves “fact-checking” from a simple task to a core strategic function. It establishes the communication team as the ultimate guarantor of truth.

2. Safety and Privacy

This principle prioritizes the safety and privacy of all stakeholders.

The Architect’s Insight: Trust is impossible without safety. Communicators must design systems that are not just compliant with data ethics, but transparent about how employee data is being used and protected.

3. Human Skills and Agency

AI must complement human creativity and critical thinking, not undermine them.

The Architect’s Insight: This is the antidote to fear. The 2026 narrative must shift from “AI replacing jobs” to “AI augmenting skills.” This empowers employees as pilots of the technology, not victims of it.

4. Trust and Truthfulness

The charter aligns AI adoption with the core professional principles of all communication: being truthful, fair, inclusive, and respectful.

The Architect’s Insight: This is the foundation. Technology does not change our core purpose. It simply provides a new, powerful context for it.

Blueprint for 2026: Moving from Policy to Practice

A framework is only powerful if it is built. Here is the blueprint for translating the IoIC’s principles into a practical, trust-building communication system for 2026.

1. Design for Total Transparency

The 57% of employees hiding their AI use are not a compliance problem. They are a transparency failure.

The 2026 Action: Stop “shadow AI” by creating clear, simple, and accessible usage policies. Communicate how and why AI is being used. If an AI tool is used to monitor performance, state it. If it is used to draft internal comms, disclose it. Trust begins when secrecy ends.

2. Architect the Augmentation Narrative

The “replacement” narrative is driven by fear and uncertainty. The 53% of employees who have received no formal AI training are a clear sign of this.

The 2026 Action: Proactively build a new narrative around augmentation. Frame AI as a tool that elevates human skills. Launch dedicated training programs that focus on critical thinking, strategic oversight, and creative prompting. Position your people as pilots of the technology, not passengers.

3. Mandate Human-in-the-Loop Governance

That 66% of employees use AI without evaluating its responses is a direct threat to organizational quality and credibility.

The 2026 Action: Embed human verification into the workflow. The communication function must act as the final checkpoint. No critical internal or external message should be published without explicit, critical human review. This is non-negotiable.

4. Build a Leadership-Led Trust Model

The 18-point trust gap between leaders and staff is the most dangerous fracture.

The 2026 Action: Leaders must model ethical AI use publicly. They must be the first to complete the training and the first to follow the transparency policies. When leaders demonstrate their own trust in the system, employees will follow.
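
For teams that publish through a scripted or automated workflow, the human-in-the-loop checkpoint from item 3 can be pictured as a simple gate. The Python sketch below is only an illustration, not a reference to any real tool: the names `Message`, `approve`, `publish`, and `ReviewRequired` are hypothetical, and the rule that every critical or AI-generated message needs a named reviewer is an assumption made for the example. It also appends a short disclosure line, in the spirit of item 1.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Message:
    """A draft internal or external message (hypothetical model)."""
    body: str
    ai_generated: bool
    critical: bool
    reviewed_by: Optional[str] = None  # name of the human reviewer, if any


class ReviewRequired(Exception):
    """Raised when a message must be reviewed by a person before publishing."""


def approve(message: Message, reviewer: str) -> Message:
    """Record that a named person has reviewed the message."""
    if not reviewer.strip():
        raise ValueError("reviewer must be a named person")
    message.reviewed_by = reviewer
    return message


def publish(message: Message) -> str:
    """Return the text to publish, or refuse if review is missing.

    Assumed rule: any critical or AI-generated message needs a named reviewer.
    """
    needs_review = message.critical or message.ai_generated
    if needs_review and message.reviewed_by is None:
        raise ReviewRequired("human review required before publishing")
    if message.ai_generated:
        # Disclose AI assistance alongside the name of the human reviewer.
        return f"{message.body} [AI-assisted, reviewed by {message.reviewed_by}]"
    return message.body
```

In this sketch, calling `publish` on an unreviewed AI-generated draft raises `ReviewRequired`; the same draft goes out, with its disclosure line, once `approve` has recorded a reviewer. The value is less in the code than in the design choice: the checkpoint is enforced by the workflow itself rather than left to individual habit.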

Conclusion

The data from 2025 is a clear mandate. Technology cannot solve a human problem.

The 54% trust gap is not a failure of AI. It is a failure of communication design.

For 2026, the imperative is clear. We must stop managing a technical rollout and start architecting a human-centered system. The IoIC charter gives us the blueprint.

Our job is to build it.